# Amazon DynamoDB Persistence

This service allows you to persist state updates using the Amazon DynamoDB database. Query functionality is also fully supported.

Features:

- Writing/reading information to the Amazon DynamoDB database

- Configurable database table names

- Automatic table creation

# Disclaimer

This service is provided "AS IS", and the user takes full responsibility for any charges or damage to Amazon data.

# Prerequisites

You must first set up an Amazon account as described below.

Familiarize yourself with AWS pricing before using this service. Amazon may charge you when this service queries or stores data in DynamoDB. See the Amazon DynamoDB pricing pages for details, and note the possible Free Tier benefits.

# Setting Up an Amazon Account

Log in to the AWS web console

- Sign up for Amazon AWS.

- Select the AWS region in the AWS console using these instructions. Note the region identifier in the URL (e.g. `https://eu-west-1.console.aws.amazon.com/console/home?region=eu-west-1` means that the region ID is `eu-west-1`).
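To illustrate how the region ID relates to the console URL, here is a minimal Python sketch. The function name `region_from_console_url` is hypothetical and not part of openHAB or any AWS tool; it only assumes the URL shape shown in the example above.

```python
from urllib.parse import urlparse, parse_qs

CONSOLE_SUFFIX = ".console.aws.amazon.com"


def region_from_console_url(url):
    """Return the region ID found in an AWS console URL (hypothetical helper)."""
    parsed = urlparse(url)
    # Prefer the explicit ?region=... query parameter when it is present.
    query_region = parse_qs(parsed.query).get("region")
    if query_region:
        return query_region[0]
    # Otherwise fall back to the first label of a regional console host name.
    host = parsed.hostname or ""
    if host.endswith(CONSOLE_SUFFIX):
        return host[: -len(CONSOLE_SUFFIX)]
    raise ValueError(f"no region found in {url!r}")


print(region_from_console_url(
    "https://eu-west-1.console.aws.amazon.com/console/home?region=eu-west-1"
))
```

The value it returns is what the `region` configuration property expects later in this document.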

Create policy controlling permissions for AWS user

Here we create an AWS IAM policy to limit exposure to AWS resources. This way, the openHAB DynamoDB addon has only limited access to AWS, even if the credentials are compromised.

Note: this policy is only valid for the new table schema.

The new table schema is the default for fresh openHAB installations and for users who are using DynamoDB for the first time.

Users with the old table schema can use the pre-existing policy `AmazonDynamoDBFullAccess` (although it gives wider-than-necessary permissions).

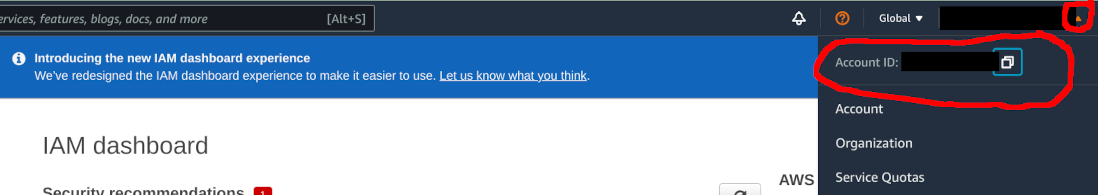

- Open the Services menu and search for IAM.

- In the top-right corner, press the small arrow next to your name. Copy the Account ID to the clipboard by pressing the small "copy" icon.

- In the IAM dialog, select Policies from the menu on the left.

- Click Create policy.

- Open the JSON tab and enter the policy code below.

- Make the following changes to the `Resource` section of the policy JSON:

  - Replace the AWS account ID `055251986555` with the one on your clipboard (copied in the second step above).

  - If you use a region other than `eu-west-1`, change the entries accordingly.

```json
{
  "Version": "2012-10-17",
  "Statement": [
    {
      "Sid": "VisualEditor0",
      "Effect": "Allow",
      "Action": [
        "dynamodb:BatchGetItem",
        "dynamodb:BatchWriteItem",
        "dynamodb:UpdateTimeToLive",
        "dynamodb:ConditionCheckItem",
        "dynamodb:PutItem",
        "dynamodb:DeleteItem",
        "dynamodb:Scan",
        "dynamodb:Query",
        "dynamodb:UpdateItem",
        "dynamodb:DescribeTimeToLive",
        "dynamodb:DeleteTable",
        "dynamodb:CreateTable",
        "dynamodb:DescribeTable",
        "dynamodb:GetItem",
        "dynamodb:UpdateTable"
      ],
      "Resource": [
        "arn:aws:dynamodb:eu-west-1:055251986555:table/openhab",
        "arn:aws:dynamodb:eu-west-1:055251986555:table/openhab/index/*"
      ]
    },
    {
      "Sid": "VisualEditor1",
      "Effect": "Allow",
      "Action": [
        "dynamodb:ListTables",
        "dynamodb:DescribeReservedCapacity",
        "dynamodb:DescribeLimits"
      ],
      "Resource": "*"
    }
  ]
}
```

- Click Next: Tags

- Click Next: Review

- Enter `openhab-dynamodb-policy` as the Name

- Click Create policy to finish policy creation
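The two `Resource` entries in the policy follow one pattern built from the region, the account ID and the table name. As a rough sketch of that substitution (the helper name `table_resource_arns` is hypothetical, and `openhab` is the table name used in the policy above), the entries could be generated like this:

```python
import json


def table_resource_arns(region, account_id, table="openhab"):
    """Build the two Resource ARNs used in the policy (hypothetical helper)."""
    base = f"arn:aws:dynamodb:{region}:{account_id}:table/{table}"
    # The second entry covers any index defined on the same table.
    return [base, f"{base}/index/*"]


# Example values taken from the policy above; substitute your own.
print(json.dumps(table_resource_arns("eu-west-1", "055251986555"), indent=2))
```

If you configure a different table name in the addon, the ARNs presumably need to change to match it.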

Create user for openHAB

Here we create an AWS user with programmatic access to DynamoDB and associate the user with the policy created above.

- Open Services -> IAM -> Users -> Add users. Enter `openhab` as User name, and tick Programmatic access

- Click Next: Permissions

- Select Attach existing policies directly, and search policies with `openhab-dynamodb-policy`. Tick `openhab-dynamodb-policy` and proceed with Next: Tags

- Click Next: Review

- Click Create user

- Record the Access key ID and Secret access key

# Configuration

This service can be configured using the MainUI or the persistence configuration file `services/dynamodb.cfg`.

In order to configure the persistence service, you need to configure AWS credentials to access DynamoDB.

For new users, the other default settings are OK.

DynamoDB persistence users with data stored with openHAB 3.1.0 or earlier need to decide whether to opt in to the "new", more optimized table schema or stay with "legacy". See below for details.

# Table schema

The DynamoDB persistence addon provides two different table schemas: "new" and "legacy". As the name implies, "legacy" is offered for backwards compatibility, for existing users who want to access data that is already stored in DynamoDB. All users are advised to transition to the "new" table schema, which is more optimized.

At the moment there is no supported way to migrate data from the old format to the new one.

# New table schema

Configure the addon to use the new schema by setting the `table` parameter (name of the table).

Only one table will be created for all data. The table will have the following fields:

| Attribute | Type | Data type | Description |
|---|---|---|---|
| `i` | String | Yes | Item name |
| `t` | Number | Yes | Timestamp in milliepoch |
| `s` | String | Yes | State of the item, stored as DynamoDB string. |
| `n` | Number | Yes | State of the item, stored as DynamoDB number. |
| `exp` | Number | Yes | Expiry date for item, in epoch seconds |

Other notes

- `i` and `t` form the composite primary key (partition key, sort key) for the table.

- Only one of the `s` or `n` attributes is specified, not both. Most items are converted to number type for the most compact representation.

- Compared to the legacy format, data overhead is minimized by using short attribute names, number timestamps and only a single table.

- The `exp` attribute is used with the DynamoDB Time To Live (TTL) feature to automatically delete old data.
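To make the layout concrete, the following Python sketch builds one record with the attribute names from the table above. It is only an illustration of the data layout, not the addon's implementation: the function `new_schema_item` is hypothetical, Python types stand in for openHAB state types, and the expiry calculation assumes `exp` is the stored timestamp plus the configured number of days.

```python
SECONDS_PER_DAY = 86_400


def new_schema_item(item_name, timestamp_ms, state, expire_days=None):
    """Build a dict shaped like one record in the new schema (illustrative)."""
    item = {"i": item_name, "t": timestamp_ms}
    # Only one of "n" (number) or "s" (string) is set, never both.
    # bool is excluded because it is a subclass of int in Python.
    if isinstance(state, (int, float)) and not isinstance(state, bool):
        item["n"] = state
    else:
        item["s"] = str(state)
    if expire_days is not None:
        # Assumption: expiry is relative to the stored timestamp, in epoch seconds.
        item["exp"] = timestamp_ms // 1000 + expire_days * SECONDS_PER_DAY
    return item


print(new_schema_item("Temperature", 1_600_000_000_000, 21.5, expire_days=30))
print(new_schema_item("Mode", 1_600_000_000_000, "AUTO"))
```

Note that `t` is in milliseconds while `exp` is in seconds, which is easy to mix up when inspecting the table by hand.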

# Legacy schema

Configure the addon to use the legacy schema by setting the `tablePrefix` parameter.

- When an item is persisted via this service, a table is created (if necessary).

- The service will create at most two tables for different item types.

- The tables will be named `<tablePrefix><item-type>`, where `<item-type>` is either `bigdecimal` (numeric items) or `string` (string and complex items).

- Each table will have three columns: `itemname` (item name), `timeutc` (in ISO 8601 format with millisecond accuracy), and `itemstate` (either a number or string representing item state).
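The naming rule and the three columns can be sketched in Python as follows. This is an illustration under assumptions rather than the addon's code: the helper names and the `openhab-` prefix are hypothetical, Python types stand in for openHAB item types, and the exact ISO 8601 variant the addon writes (here UTC with a trailing `Z`) is assumed.

```python
from datetime import datetime, timezone


def is_numeric(state):
    # bool is excluded because it is a subclass of int in Python.
    return isinstance(state, (int, float)) and not isinstance(state, bool)


def legacy_table_name(table_prefix, state):
    """Pick <tablePrefix>bigdecimal or <tablePrefix>string (illustrative)."""
    return table_prefix + ("bigdecimal" if is_numeric(state) else "string")


def legacy_item(item_name, timestamp_ms, state):
    """Build a dict shaped like one legacy record (illustrative)."""
    seconds, millis = divmod(timestamp_ms, 1000)
    moment = datetime.fromtimestamp(seconds, tz=timezone.utc).replace(
        microsecond=millis * 1000
    )
    # ISO 8601 with millisecond accuracy; the trailing "Z" is an assumption.
    timeutc = moment.isoformat(timespec="milliseconds").replace("+00:00", "Z")
    return {
        "itemname": item_name,
        "timeutc": timeutc,
        "itemstate": state if is_numeric(state) else str(state),
    }


print(legacy_table_name("openhab-", 21.5))
print(legacy_item("Temperature", 1500, 21.5))
```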

# Credentials Configuration Using Access Key and Secret Key

| Property | Default | Required | Description |
|---|---|---|---|
| accessKey | | Yes | access key as shown in Setting Up an Amazon Account. |
| secretKey | | Yes | secret key as shown in Setting Up an Amazon Account. |
| region | | Yes | AWS region ID as described in Setting Up an Amazon Account. The region needs to match the region that was used to create the user. |

# Credentials Configuration Using Credentials File

Alternatively, instead of specifying `accessKey` and `secretKey`, you can point the service to a credentials profile file.

| Property | Default | Required | Description |
|---|---|---|---|
| profilesConfigFile | | Yes | path to the credentials file. For example, `/etc/openhab2/aws_creds`. Please note that the user that runs openHAB must have appropriate read rights to the credentials file. For more details on the Amazon credentials file format, see the Amazon documentation. |
| profile | | Yes | name of the profile to use |
| region | | Yes | AWS region ID as described in Step 2 in Setting Up an Amazon Account. The region needs to match the region that was used to create the user. |

Example of service configuration file (`services/dynamodb.cfg`):

```ini
profilesConfigFile=/etc/openhab2/aws_creds
profile=fooprofile
region=eu-west-1
```

Example of credentials file (`/etc/openhab2/aws_creds`):

```ini
[fooprofile]
aws_access_key_id=testAccessKey
aws_secret_access_key=testSecretKey
```
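The credentials file uses an INI-style layout with one section per profile. As a quick sanity check of a file's structure, the sketch below reads it with Python's standard `configparser`. This is not the parser the AWS SDK uses, and `read_profile` is a hypothetical helper, so treat it only as a way to verify that the profile name and both keys are present.

```python
import configparser


def read_profile(credentials_text, profile):
    """Return (access key, secret key) for one profile (hypothetical helper)."""
    parser = configparser.ConfigParser()
    parser.read_string(credentials_text)
    # Raises KeyError if the profile or either key is missing.
    section = parser[profile]
    return section["aws_access_key_id"], section["aws_secret_access_key"]


# Same content as the example credentials file above.
example = """\
[fooprofile]
aws_access_key_id=testAccessKey
aws_secret_access_key=testSecretKey
"""

print(read_profile(example, "fooprofile"))
```

The profile name passed here must match the `profile` property in `services/dynamodb.cfg`.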

# Advanced Configuration

In addition to the configuration properties above, the following are also available:

| Property | Default | Required | Description |

|---|---|---|---|

| expireDays | (null) | No | Expire time for data in days (relative to stored timestamp) |

| readCapacityUnits | 1 | No | read capacity for the created tables |

| writeCapacityUnits | 1 | No | write capacity for the created tables |

Refer to the Amazon documentation on provisioned throughput for details on read/write capacity.

In case you have not reserved enough capacity for write and/or read, you will notice error messages in openHAB logs.
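For a rough feel of what the default capacity of 1 allows, the sketch below estimates the units needed for a given load. It relies on the general DynamoDB definitions (one write capacity unit covers one write per second for an item up to 1 KB; one read capacity unit covers one strongly consistent read per second for an item up to 4 KB, or two eventually consistent reads) and treats 1 KB as 1024 bytes. The function names are hypothetical, and how the addon batches its reads and writes is not covered here.

```python
import math


def write_capacity_units(writes_per_second, item_size_bytes):
    """Estimate WCUs: each write costs one unit per started 1 KB of item size."""
    units_per_write = math.ceil(item_size_bytes / 1024)
    return math.ceil(writes_per_second * units_per_write)


def read_capacity_units(reads_per_second, item_size_bytes, strongly_consistent=True):
    """Estimate RCUs: each read costs one unit per started 4 KB of item size."""
    units = reads_per_second * math.ceil(item_size_bytes / 4096)
    if not strongly_consistent:
        # Eventually consistent reads cost half as much.
        units /= 2
    return math.ceil(units)


# Example: three state updates per second with small (100 byte) records.
print(write_capacity_units(3, 100))
print(read_capacity_units(10, 100, strongly_consistent=False))
```

Under these assumptions, a sustained rate above one small write per second would exceed the default `writeCapacityUnits` of 1.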

The DynamoDB Time to Live (TTL) setting is configured using `expireDays`.

All item- and event-related configuration is done in the file `persistence/dynamodb.persist`.

# Details

# Caveats

When the tables are created, the read/write capacity is set according to the configuration. However, the service does not modify the capacity of existing tables. As a workaround, you can modify the read/write capacity of existing tables using the Amazon console.

A similar caveat applies to the DynamoDB Time to Live (TTL) setting `expireDays`.

# Developer Notes

# Updating Amazon SDK

- Update the SDK version and the `netty-nio-client` version in `scripts/fetch_sdk_pom.xml`. You can use the maven online repository browser to find the latest version available online.

- Run `scripts/fetch_sdk.sh`.

- Copy the printed dependencies to `pom.xml`. If necessary, adjust `feature.xml`, `bnd.importpackage` and `dep.noembedding` as well (probably rarely needed but it happens).

- Check and update the `NOTICE` file with all the updated, new and removed dependencies.

After these changes, it's good practice to run the integration tests (against live AWS DynamoDB) in the `org.openhab.persistence.dynamodb.test` bundle.

See README.md in the test bundle for more information on how to execute the tests.

# Running integration tests

When running integration tests, a local temporary DynamoDB server is used, emulating the real AWS DynamoDB API. You can configure AWS credentials to run the tests against the real AWS DynamoDB for the most realistic results.

Eclipse instructions

- Run all tests (in package `org.openhab.persistence.dynamodb.internal`) as JUnit Tests

- Configure the run configuration, and open the Arguments sheet

- In VM arguments, provide the credentials for AWS

```
-DDYNAMODBTEST_REGION=REGION-ID
-DDYNAMODBTEST_ACCESS=ACCESS-KEY
-DDYNAMODBTEST_SECRET=SECRET
--add-opens=java.base/java.lang=ALL-UNNAMED
```

The `--add-opens` parameter is also necessary with the local temporary DynamoDB server; otherwise Mockito will fail at runtime with the error `java.base does not "opens java.lang" to unnamed module`.
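If you prefer to assemble the VM arguments from values kept elsewhere (for example, environment variables), a small helper like the one below can produce them. The function `vm_arguments` is a hypothetical convenience and not part of the test bundle; only the four argument names come from the list above.

```python
def vm_arguments(region, access_key, secret):
    """Return the VM arguments for the integration tests (hypothetical helper)."""
    return [
        f"-DDYNAMODBTEST_REGION={region}",
        f"-DDYNAMODBTEST_ACCESS={access_key}",
        f"-DDYNAMODBTEST_SECRET={secret}",
        # Needed by Mockito, also when the local DynamoDB server is used.
        "--add-opens=java.base/java.lang=ALL-UNNAMED",
    ]


# Placeholder values; paste the printed line into the VM arguments field.
print(" ".join(vm_arguments("REGION-ID", "ACCESS-KEY", "SECRET")))
```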